The Future of Multimodal Annotation for Next Generation AI Systems

Multimodal annotation is redefining AI by enabling systems to understand text, audio, video, and context together just like humans. Next-gen AI success depends on synchronized data, domain expertise, and hybrid human-AI workflows to handle real-world complexity. Discover how high-quality, context-rich data is becoming the key to building intelligent, reliable, and production-ready AI systems.

When we talk about Artificial Intelligence, we often imagine a sleek, all-knowing brain that effortlessly makes sense of the world. But if you peek under the hood of the most impressive AI systems driving innovation today, the ones that can see a complex surgical procedure, hear the emotional context in a customer’s voice, and read a 50-page legal contract all at once, you’ll find that their foundation isn't magic. It's built on a foundation of meticulously structured, expertly curated data. More specifically, a foundation of Multimodal Annotation.

In the foundational days of machine learning, we taught models much like we teach toddlers with flashcards. We showed them a simplified, one-dimensional representation of a concept. This is a cat (image), This is the word 'Apple' (text), This approach was straightforward and effective for creating narrow AI, models that were exceptional at one specific task, like cataloging photos or translating a sentence.

But the next generation of AI isn’t interested in flashcards; it is learning to experience the world exactly the way we do: messy, sensory, and deeply interconnected. A next-gen system doesn't just process a video stream. It processes the context of the video, which includes the words spoken, the tone of the voice, and the background environment. This is the new standard of intelligence, and it requires a complete rethink of how we prepare our data.

At Globik AI, we’ve observed this shift firsthand. Enterprises are no longer approaching us asking for standard bounding boxes or simple transcription. They are demanding intelligence synchronization. As we move further into the late 2020s, the Future of Multimodal Annotation is no longer about just scaling up the volume of labeling, it’s about a qualitative shift toward creating complex, context-rich datasets.

Why "Multimodal" Is No Longer Optional

To understand the trajectory of AI, we must accept a simple truth: the world does not communicate in a single modality.

Think of a simple conversation. A person says the phrase, That’s just great. If your AI model only reads the text, it might logically classify the statement as positive or appreciative. If that same model only hears the audio, it might pick up on the dripping sarcasm in the tone. If it only sees the video, it might catch the physical eye-roll that definitively confirms the frustration.

A Next Generation AI system, however, must process all three streams (text, audio, and video) simultaneously to synthesize the correct answer: that the person is actually upset. This is the essence of multimodal AI. The model must have eyes, ears, and reading comprehensionthat are all communicating perfectly with each other.

To train such a system, you cannot simply label the video, transcribe the audio, and then glue them together at the end. That approach creates massive gaps in understanding. You have to synchronize them from the ground up, labeling the relationships, the timing, and the context between those different signals. This is the fundamental challenge, and opportunity, that the industry is racing to solve.

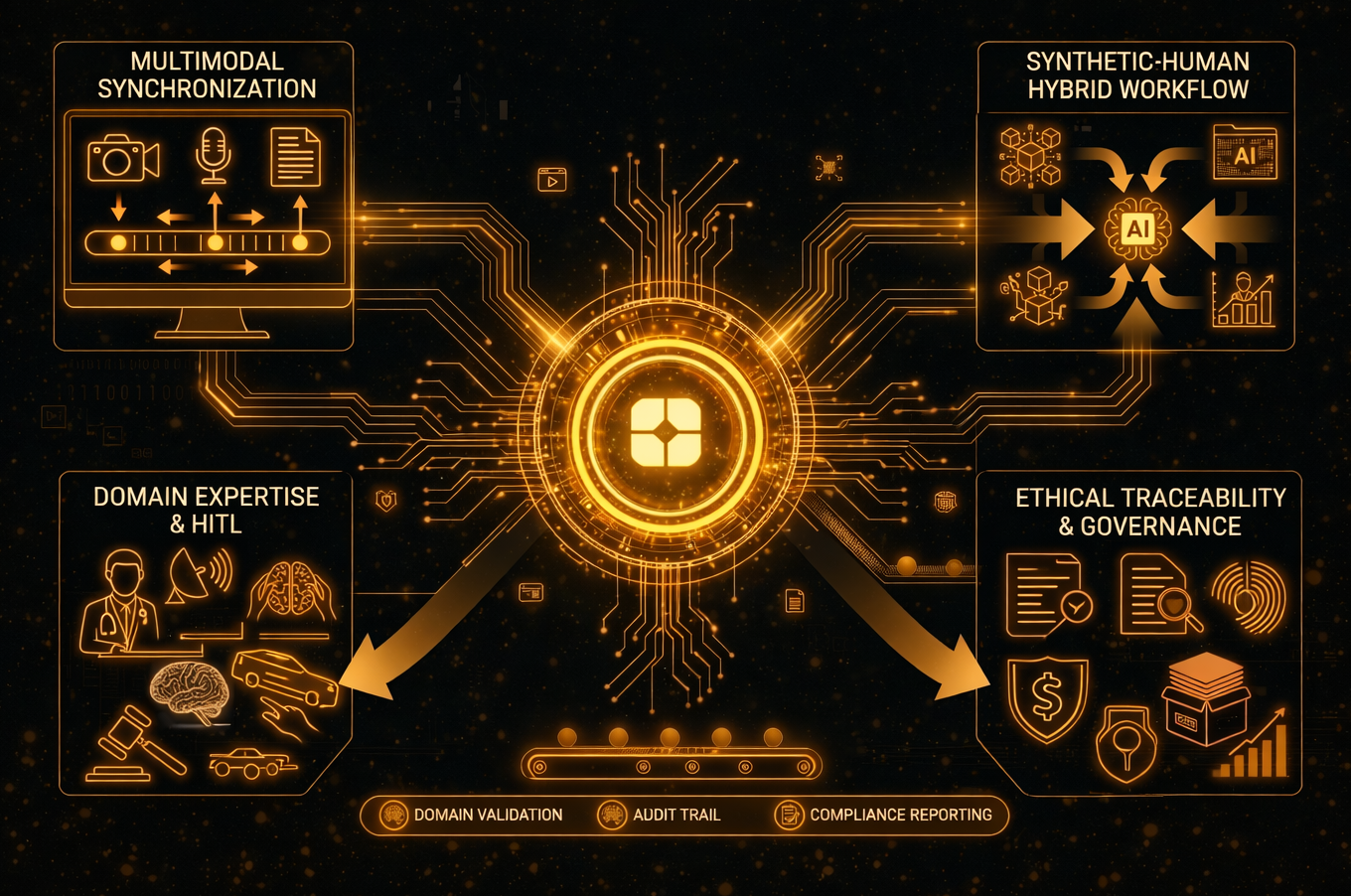

The Four Pillars Defining Next-Gen Multimodal Annotation

The future of annotation is not a continuation of current practices; it’s a technological and operational shift. We at Globik AI have identified four main pillars that define this next phase of development.

1. Temporal and Relational Consistency (Moving Beyond the Frame)

In the classic era of computer vision, an annotator might label a car in Frame 1 and another car in Frame 2 of a video. This approach, while functional, missed the essence of time and movement.

The new paradigm requires cross-modal alignment that maintains consistency not just within a single frame, but over the entire duration of an event. In the context of an autonomous vehicle (AV), this is critical. An AV doesn’t just see a red light; it sees a red light and listens to a voice command saying Stop and processes depth data from a LiDAR sensor, all while calculating its own velocity. The annotation process must ensure that the Stop instruction in the audio track is perfectly aligned to the exact microsecond the red light is captured by the camera and the sensor data indicates the car’s position. If these signals are off by even half a second, the AI’s brain becomes hopelessly confused, and a safety system can fail.

The future here is about labeling the relationships between things over time. We are teaching AI to understand complex action sequences and causal links, rather than just static object detection. This means moving from this is a hammer and this is a nail to this is the action of a hammer driving a nail over a 5-second interval.

2. Domain-Expertise (The Death of the "Generalist" Labeler)

For years, the standard approach to data labeling was brute force: find as many people as possible, pay them by the task, and have them draw boxes on screens. This model worked when the questions were simple, like Is this a dog? But AI is growing up, and the questions it needs to answer are now Peer to Peer.

Next-generation AI must operate as a true assistant to highly skilled professionals. For AI to be useful in high-stakes fields like medicine, law, or engineering, the person doing the labeling needs to have an equivalent level of domain expertise. We have moved far beyond the era where anyone with a laptop could effectively label data.

In the realm of Medical Multimodal Annotation, for instance, simple visual recognition is useless. A model needs radiologists to annotate a 3D MRI scan, highlighting subtle anomalies. But it simultaneously needs those annotations to be aligned with the patient’s written medical history, which may contain critical context written in complex medical jargon or even handwritten notes. The annotator must understand both data types at a professional level to link them effectively. At Globik AI, we have internalized a crucial lesson: "Human-in-the-Loop" (HITL) only delivers value if the Human truly understands the complexities of the Loop. The future of annotation relies on an workforce of specialized, certified professionals.

3. The Synthetic-Human Hybrid Workflow (The Fight Against "Model Collapse")

There is currently significant buzz around synthetic data, which is AI-generated data used to train other AI. Some industry analysts even predict that synthetic data will replace human labeling entirely. This view, however, oversimplifies the reality of how AI learns.

Synthetic data is an excellent accelerator. It can create millions of perfect or easy examples of specific concepts. However, AI faces a critical risk known as model collapse. If a large model only learns from data generated by previous models, it eventually loses touch with reality. It forgets the rare, unusual, or chaotic edge cases that make up the real world and begins to hallucinate, generating increasingly absurd and ungrounded outputs.

The future of annotation is a symbiotic synthetic-human hybrid workflow. Synthetic data is used to provide the foundational textbook knowledge in massive volumes, which saves huge amounts of time and cost. But human experts are then brought in to provide the anchors, labeling the highly complex, ambiguous, and rare edge cases that a synthetic generator would miss or misrepresent. Think of it as a master teacher grading a massive pile of homework generated by a very advanced computer. This hybrid approach allows for massive scale while ensuring the model remains grounded in human experience and reality.

4. Ethical Traceability and Comprehensive Governance

With the implementation of the EU AI Act and several key global regulations tightening up, the most important question an AI enterprise can ask isn't What can this model do? but Where did this data come from, and is it ethically sound?

The future of annotation must incorporate complete data governance. Every single label applied to a dataset needs a digital birth certificate. We are quickly moving toward systems where any stakeholder (whether a regulator, auditor, or the company’s own internal legal team) can trace any specific AI decision or bias back to the exact group of human experts who trained that behavior.

This is about accountability. Who defined that specific behavior as unsafe? Who classified that patient symptom as high risk? Ensuring ethical traceability in multimodal datasets, which are inherently more complex, requires robust, secure annotation platforms that track every keystroke, decision pathway, and annotator profile. This pillar isn’t just a nice-to-have; it is becoming a mission-critical requirement for deploying AI in the real world.

Real-World Use Cases: Seeing Is Believing

It’s easy to discuss multimodal perception in theory, but its impact is best understood when you see it solve seemingly impossible, high-value problems. Here are a few ways this sophisticated annotation strategy is playing out across critical industries.

Case Study 1: Transforming the "Confident" Warehouse (Manufacturing & Robotics)

A massive global logistics firm was struggling with their quality control. They used a sophisticated AI-powered system to inspect heavy machinery being returned from remote construction sites. This equipment represents millions of dollars in assets, and missing damage can lead to catastrophic failures. Their old Vision-only AI was failing dramatically. It couldn't reliably differentiate between a shallow visual scratch (purely cosmetic) and a fracture that looked similar but ran deep into the component’s structure.

The Multimodal Solution:

Globik AI was brought in to build a comprehensive data annotation strategy that went far beyond basic vision. We created a workflow that meticulously labeled and synchronized three distinct data streams:

- High-Resolution 8K Video: To see the surface details with clarity.

- Acoustic Sensors: Which captured the signature sound of the engine under load.

- Maintenance Logs (OCR): Historical data that was OCR’d (Optical Character Recognition) to understand the machine’s specific repair and usage history.

By annotating these three signals together, we gave the AI a complete picture. The system learned that a specific visual pattern (the potential scratch) plus a certain clanking sound in the audio log, especially when combined with a specific service history entry, actually meant a loose internal bolt. This was something the camera, working in isolation, could never have possibly diagnosed.

The Result: The multimodal approach reduced the number of false flags by over 40% and, more importantly, caught dozens of critical failures that previously went undetected, saving the company from millions of dollars in unnecessary repairs and potential liability.

Case Study 2: Developing the Empathetic Physician (Healthcare)

A healthcare technology startup wanted to build a Revolutionary AI Doctor’s Assistant. The goal was an assistant that could join a physician during a patient consultation and generate an automated, highly-structured clinical summary of the visit. But the startup quickly realized that just transcribing the spoken words was not nearly enough. For the summary to be accurate and useful, the doctor needed the AI to capture patient nuance: were they hesitant about the diagnosis? Did they seem confused about the instructions?

The Multimodal Solution:

We deployed a team of medically-trained annotators and linguists to label the consultation data. Crucially, they did not just transcribe what was said. They were tasked with tagging the emotional context and physical signals:

- Sentiment and Tone: Tagging the audio when the patient’s tone indicated uncertainty, even if their words were positive.

- Non-Verbal Cues: Correlating the video stream to tag moments of confusion, such as the patient shrugging, looking away, or furrowing their brow while the doctor was explaining a complex treatment.

The Result: The AI assistant can now generate a clinical note that says: Patient confirmed understanding of treatment plan but showed visual and acoustic markers of confusion. Recommend simplified follow-up. This isn't just an automated scribe; it is an intelligent, emotionally-aware assistant that actively improves the quality of care and patient communication.

Industry Happenings and Benchmarking the Ecosystem

To truly understand the Future of Multimodal Annotation, we must look at what the industry standard-bearers are doing. The marketplace for annotation services is experiencing a profound contraction and evolution.

The marketplace has realized that Data Labeling is a commoditized skill, but Model Alignment is the future of enterprise value. We are moving from a standard of simple correctness (Is this a dog?) to a standard of advanced utility (Is this answer helpful, honest, and harmless?).

This shift places an immense cognitive demand on human annotators. Industry leaders are now dedicating huge resources to a technique called RLHF (Reinforcement Learning from Human Feedback), particularly in the development of Large Language Models (LLMs). In RLHF, humans act as judges, ranking multiple AI-generated responses across a variety of criteria (helpfulness, tone, safety, accuracy).

This isn't simple labeling; it's high-level human reasoning and decision-making. We are teaching the models how to think and reason, not just what to recognize. If your data provider is still focused purely on high-volume bounding boxes and not on reasoning and logic annotation, you are training your models with obsolete methodologies.

How to Future-Proof Your Data Strategy

For AI Leads, CTOs, and Product Managers plotting a path for the next five years, getting your multimodal data strategy right is the single biggest determinant of your model’s success. Here is how you ensure you are prepared for this new landscape:

Audit Your Existing Silos

The first and most important step is organizational data synthesis. Multimodal annotation cannot occur if your video data lives in your Manufacturing database and your text logs live in your Customer Service database. You must audit your internal data storage and build a unified data lake where relevant data streams can be synthesized and aligned.

Prioritize Quality Over Quantity

In the new paradigm, the ancient mantra of computer science Garbage In, Garbage Out has evolved. It is now Average In, Halucination Out. The high costs of training next-gen models mean that you cannot afford to waste your compute budget on weak, poorly-annotated data. You are far better off starting with 10,000 expert-verified multimodal samples than 10,000,000 low-quality single-modality labels that lack depth and context.

Build Partnerships with Specialized Human Capital

Do not wait until you have the model architecture built to find the team that will annotate the data. If you are building AI for healthcare, legal, or automotive, you must find annotation partners who have already built and vetted a workforce with specific credentials in those fields. Building these relationships now is critical to avoiding massive delays in your model development timeline.

Leverage Proprietary Platforms and APIs

Do not try to roll your own annotation tools for complex multimodal data. At Globik AI, we have invested years of engineering into our iTera platform, which is designed explicitly to handle the synchronization of video, audio, and sensor data. Using established APIs and platforms ensures that your data isn't just labeled but is accurately synthesized and aligned with industry standards for formatting and governance.

The Globik AI Perspective: Building the Bridge

At Globik AI, we are firm believers that the true Future of AI isn’t just a race to build the model with the largest number of parameters. It is a race to build the system with the best, most humanly-accurate data.

The next generation of AI systems will be defined not by their raw power, but by their ability to perceive the world with nuance, empathy, and context. We are creating models that can sense if a patient is truly in pain, understand the delicate sarcasm in a complex document, or identify a faulty sensor in a piece of critical infrastructure just by listening.

To do that, we aren’t just moving pixels around a screen or transcribing speech into text. We are building the profound connective tissue that allows AI to comprehend the full picture. Multimodal annotation is the fundamental bridge that takes AI out of the realm of experimental lab project and moves it into the realm of production-ready, dependable intelligence that can be trusted with our businesses, our infrastructure, and our health.

The future of intelligence is already here, and it is looking, listening, and learning. It is seeing the world with the same complexity and nuance that you and I do. The only question is: is your data ready to teach it?

Want to explore how custom, domain-expert multimodal annotation can transform your specific enterprise application? Talk to an expert at Globik AI today and let’s start building your model’s foundation.