How Data Annotation Outsourcing Is Becoming Strategic, Not Tactical

In the era of Multimodal AI and RLHF, "cheap" data has become the most expensive mistake a company can make. Explore how data annotation has evolved from a tactical commodity into a high-stakes strategic asset, and why securing a domain-expert partner is now the primary driver of model safety, regulatory compliance, and market dominance.

In the early days of Artificial Intelligence, data annotation was often treated like a digital assembly line: a repetitive, manual task where the only goal was to hit a volume target at the lowest possible price. If you needed 10,000 bounding boxes for a self-driving car algorithm, you simply looked for the cheapest hands to draw them. It was a purely tactical move, a box to be checked on the way to development.

But as we navigate 2026, the ground has shifted permanently. The rise of Large Language Models (LLMs), Multimodal AI, and the global demand for Responsible AI has turned data from a commodity into a high-stakes strategic asset. Today, outsourcing data annotation is not just about saving money; it is about securing a competitive edge, ensuring model safety, and accelerating time to market.

At Globik AI, we’ve seen this transformation firsthand. Our clients aren't just looking for "labels"; they are looking for partners who understand the nuances of their industry, from healthcare diagnostics to complex financial fraud detection.

In this deep dive, we’ll explore why data annotation outsourcing has moved from the back office to the boardroom and how it’s becoming the backbone of modern AI strategy.

The 2026 Reality: Why "Cheap" Data Is the Most Expensive Mistake

The AI ecosystem has changed. In 2020, you could get away with high-volume, low-cost labeling because we were mostly solving simple computer vision or basic sentiment analysis tasks.

Today, the stakes are different. We are dealing with:

- Multimodal Complexity: Modern models do not just see an image; they process a synchronized stream of LiDAR, thermal, and other sensor data. Labeling these disparate sources so they align perfectly in time and space requires a level of precision that a low-cost, generalist workforce simply cannot provide. When data is misaligned by even a fraction of a second, the resulting model can fail in catastrophic ways.

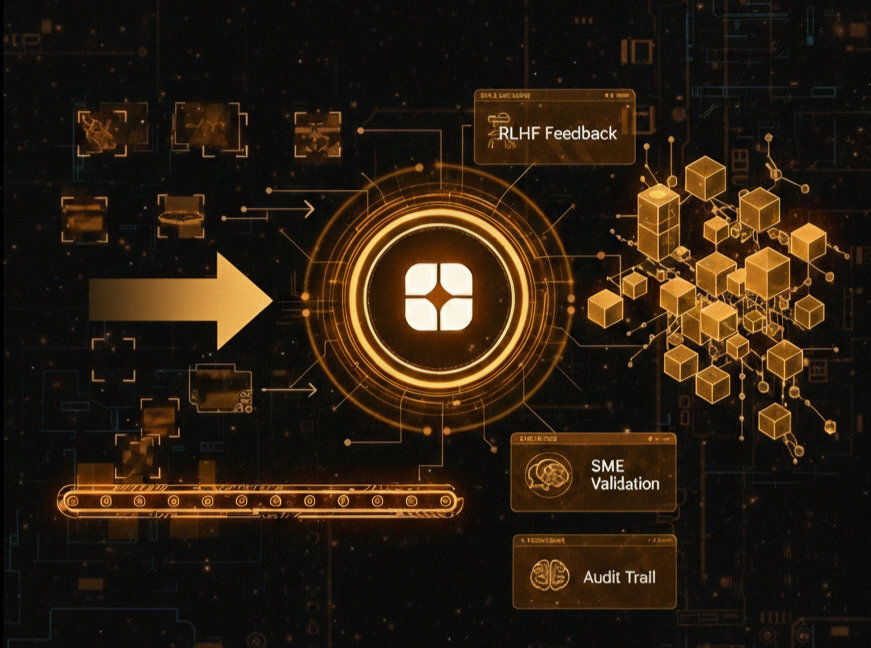

- RLHF (Reinforcement Learning from Human Feedback): In the age of LLMs, the labeler has evolved into a teacher. Through RLHF, humans guide models toward truthfulness, safety, and helpfulness. This is not a binary task of right or wrong; it is a task of nuance and reasoning. If the person providing feedback lacks the cognitive depth to identify subtle hallucinations or logical fallacies, the model will inherit those flaws.

- The Regulatory Wall: With frameworks like the EU AI Act taking effect, black-box data sourcing is a massive legal liability. Companies must now prove the provenance of their training data. If your data partner does not understand the intent behind your model, they will provide accurate labels that are contextually useless. This is what we call the Hidden Wall: the point where a model stops improving because its training data lacks the necessary depth and transparency.
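The multimodal alignment point above can be made concrete with a small check. This is a minimal sketch rather than a description of any particular pipeline: it assumes every sensor frame carries a timestamp in seconds, and the function name `match_frames` and the 50 ms tolerance are hypothetical choices made for illustration.

```python
from bisect import bisect_left


def match_frames(camera_ts, lidar_ts, tolerance_s=0.05):
    """Pair each camera timestamp with the nearest LiDAR timestamp.

    Both lists hold timestamps in seconds; lidar_ts must be sorted ascending.
    Returns (pairs, unmatched): pairs are (camera_t, lidar_t) tuples within
    the tolerance, unmatched are camera timestamps with no LiDAR frame
    close enough to be labeled together.
    """
    pairs, unmatched = [], []
    for t in camera_ts:
        i = bisect_left(lidar_ts, t)
        # Only the neighbor just before and just after t can be the nearest.
        candidates = lidar_ts[max(i - 1, 0):i + 1]
        nearest = min(candidates, key=lambda u: abs(u - t), default=None)
        if nearest is not None and abs(nearest - t) <= tolerance_s:
            pairs.append((t, nearest))
        else:
            unmatched.append(t)
    return pairs, unmatched


# Hypothetical timestamps: the last camera frame has no LiDAR frame nearby.
pairs, unmatched = match_frames([0.00, 0.10, 0.20], [0.01, 0.11, 0.32])
```

Frames that land in `unmatched` would be candidates for review before anyone labels them, since an annotation drawn across misaligned streams describes a scene that never existed at a single moment.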


From Tactical Vendor to Strategic Partner

What does it actually mean to move from a tactical approach to a strategic one? It comes down to three core shifts in how we handle data at Globik AI.

1. SME-Driven Intelligence vs. Generalist Crowds

A generalist crowd can tell you if there is a "car" in a photo. But they cannot tell you if a legal clause in a 50-page contract is high-risk, or if a specific pixel in a medical scan indicates an early-stage anomaly.

Strategic outsourcing means involving Subject Matter Experts (SMEs). At Globik AI, we emphasize domain-specific labeling. Whether it’s healthcare, BFSI, or autonomous systems, the people touching your data must understand the consequences of a mistake. If the annotator doesn't understand the domain, the model never will.

2. The iTera Bridge: Owning the Feedback Loop

Tactical labeling is a one-way street: you send data, they send back labels. Strategic partnership is a loop.

This is why we developed iTera. It isn't just a platform for drawing boxes; it’s a bridge for Active Learning. Instead of labeling 100% of a dataset, much of which is redundant, we use iTera to identify the edge cases: the 10% of data where the model is most uncertain.

By focusing human expertise on these high-impact samples, we don't just reduce costs; we increase the model’s "intelligence density."
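To illustrate the general idea of routing only uncertain samples to human experts, here is a minimal sketch of entropy-based uncertainty sampling, one common active learning strategy. It is not a description of how iTera works internally; the function names, the sample IDs, and the default 10% fraction are assumptions made for illustration.

```python
import math


def entropy(probs):
    """Shannon entropy (in nats) of a predicted class distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_uncertain(predictions, fraction=0.10):
    """Return the IDs of the samples the model is least sure about.

    predictions maps a sample ID to the model's class probabilities.
    fraction is the share of the pool to send to human annotators.
    """
    ranked = sorted(
        predictions,
        key=lambda sample_id: entropy(predictions[sample_id]),
        reverse=True,  # highest entropy (most uncertain) first
    )
    k = max(1, math.ceil(len(ranked) * fraction))
    return ranked[:k]


# Hypothetical model outputs for a four-sample pool.
pool = {
    "a": [0.99, 0.01],
    "b": [0.50, 0.50],
    "c": [0.90, 0.10],
    "d": [0.60, 0.40],
}
to_label = select_uncertain(pool, fraction=0.5)  # ["b", "d"]
```

Entropy is only one possible uncertainty measure. Margin between the top two classes or disagreement across an ensemble would slot into the same `key` function.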

3. Data Governance as a First-Class Citizen

In the past, "provenance" was an afterthought. Today, it is a requirement for AI Data Governance. Strategic partners provide more than labels; they provide an audit trail.

- Who labeled this data, and what were their specific qualifications?

- How was bias identified and mitigated in this specific batch?

- Is this data fully compliant with regional privacy laws and ethical guidelines?
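The three audit questions above map naturally onto a per-label record. The sketch below shows one possible shape for such a record; every field name is hypothetical, and a real schema would depend on the regulations and internal policies that apply to the project.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Tuple


@dataclass(frozen=True)
class LabelAuditRecord:
    """One audit-trail entry for a labeled item (illustrative fields only)."""

    item_id: str
    label: str
    annotator_id: str                           # who labeled this data
    annotator_qualifications: Tuple[str, ...]   # what qualified them to do so
    bias_checks: Tuple[str, ...]                # how bias was checked in the batch
    privacy_regimes: Tuple[str, ...]            # which privacy rules were reviewed
    labeled_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        """Serialize the record so it can be stored alongside the label."""
        return json.dumps(asdict(self), sort_keys=True)


# Hypothetical example values.
record = LabelAuditRecord(
    item_id="scan-00042",
    label="anomaly_suspected",
    annotator_id="ann-017",
    annotator_qualifications=("board-certified radiologist",),
    bias_checks=("second-reader review",),
    privacy_regimes=("GDPR",),
)
```

The record is frozen so that an entry cannot be silently edited after the fact; a correction would be written as a new record rather than a mutation of the old one.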

The Friction We Don’t Talk About: Edge Cases and Human Nuance

The most dangerous assumption in AI development is that data is "solved."

Consider the "long tail" of edge cases. In autonomous mobility, a model might see a billion miles of highway, but it’s the one time it sees a person in a dinosaur costume crossing the street at dusk that determines if the system is safe.

A tactical vendor will label that person as a "pedestrian" and move on. A strategic partner will flag that as a critical edge case, realize the model’s confidence score is low, and work with your engineering team to generate or curate more high-variance data to "fortify" that weakness.

This is the difference between a vendor who follows instructions and a partner who shares your goal of production-level reliability.

The Quality Paradox: Why Scaling Requires Less Data, Not More

There is a common misconception that more data always equals a better model. In the strategic era, we have found the opposite to be true: quality trumps quantity. When you move away from the tactical mindset, you stop trying to feed the model millions of low-quality, noisy data points. Instead, you curate a Gold Standard dataset.

Strategic outsourcing allows you to build these high-fidelity datasets that act as the final exam for a model before it is granted access to live customers. This approach not only saves on computational costs during training but also results in a model that is more robust and less prone to drifting once deployed.

The Future: Model Evaluation and Safety

As we look toward the next year, the "Strategic Pillar" will expand even further into Model Evaluation and Benchmarking. We are moving into a phase where "Human-in-the-loop" isn't just for training; it’s for safety and alignment. This includes:

- Red Teaming: Proactively trying to lead an LLM into unsafe or biased territory to build better guardrails. This requires creative, adversarial thinking that low-cost vendors are not incentivized to provide.

- Bias Auditing: Using diverse human teams to ensure that a FinTech model is not unintentionally discriminating against specific demographics based on historical data patterns.

- Quality Benchmarking: Ensuring that the model's tone and decision-making logic align with the specific cultural and corporate values of the end user.
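Human bias audits are often paired with a simple quantitative check. As a minimal sketch of one such check for the FinTech case above, the code below compares approval rates across demographic groups and reports the ratio of the lowest rate to the highest. The function names and the sample decisions are hypothetical, and the threshold at which a ratio becomes a concern is a policy decision this sketch deliberately leaves open.

```python
from collections import defaultdict


def approval_rates(decisions):
    """Compute the approval rate per group.

    decisions is an iterable of (group, approved) pairs, approved being a bool.
    """
    totals, approved = defaultdict(int), defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {group: approved[group] / totals[group] for group in totals}


def disparity_ratio(rates):
    """Lowest group approval rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


# Hypothetical model decisions: group A approved 8 of 10, group B 5 of 10.
decisions = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 5 + [("B", False)] * 5
)
rates = approval_rates(decisions)
ratio = disparity_ratio(rates)  # about 0.625: B is approved far less often
```

A low ratio does not prove discrimination on its own, which is why the diverse human review described above still matters: people have to decide whether the gap reflects a legitimate factor or a pattern inherited from historical data.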

The Economics of Strategic Outsourcing

While tactical labeling seems cheaper on a spreadsheet, the total cost of ownership (TCO) tells a different story. Poorly labeled data leads to:

- Model Retraining Costs: Having to restart the training process because of corrupted or biased datasets.

- Delayed Time to Market: Spending months cleaning data that should have been correct the first time.

- Legal and Reputational Risk: Deploying a biased or unsafe model that violates regulations or loses customer trust.

Strategic outsourcing with Globik AI minimizes these hidden costs, providing a predictable path to a production-ready model.

Conclusion: The Soul of Your AI

Your model’s architecture is its brain, but your data is its experience. If that experience is shallow, biased, or poorly interpreted, the brain will fail no matter how many billions of parameters it has.

At Globik AI, we don’t view ourselves as a service provider at the end of a supply chain. We view ourselves as the foundation. We are here to ensure that when your model hits the real world, it doesn't just function; it thrives with Production-Ready Intelligence.

The era of the tactical label is over. The era of strategic data integrity has begun. It is time to stop buying labels and start building the future of your intelligence.